DBSCAN

- Clustering Techniques Overview

- DBSCAN Algorithm Steps

- Density-Based Clustering Methods

- Evaluation Metrics for DBSCAN

- Advantages of DBSCAN

- Disadvantages of DBSCAN

- Summary of DBSCAN

DBSCAN clustering is an unsupervised learning method that groups data items according to their patterns. Various clustering methods exist, each using a different technique. Fundamentally, all clustering methods follow a similar approach: they first compute similarities and then use this information to group data points that share similar attributes or properties. The DBSCAN approach, or Density-Based Spatial Clustering of Applications with Noise, is the main focus of this article.

Clusters are regions with dense concentrations of data points, separated by areas where point density is comparatively lower. DBSCAN is built on the fundamental concepts of "clusters" and "noise." It uses a local cluster criterion, namely density-connected points.

DBSCAN categorizes data points according to their local density within a defined radius ε. Points situated in low-density areas between clusters are classified as noise. The ε-neighborhood of an object is the region within a distance ε of that object. An object is a core object if its ε-neighborhood contains at least a specified minimum number of objects, MinPts.

Why DBSCAN?

Approaches such as hierarchical clustering and partitioning are effective when applied to convex or spherically shaped clusters. In other words, they work well only for datasets with compact, clearly separated groups. Moreover, the presence of outliers and noise in the dataset significantly influences their results.
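To see why outliers matter for partitioning methods, consider how k-means places a cluster center: it takes the mean of the points assigned to the cluster. The sketch below is only an illustration with made-up toy data (the `group` and `outlier` values are hypothetical), showing how far a single outlier can drag that mean.

```python
def centroid(points):
    """Mean of a list of 2-D points, which is how k-means places a cluster center."""
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)

# Hypothetical toy data: a tight group of four points and one distant outlier.
group = [(1.0, 1.0), (1.0, 2.0), (2.0, 1.0), (2.0, 2.0)]
outlier = (50.0, 50.0)

clean_center = centroid(group)
shifted_center = centroid(group + [outlier])

print(clean_center)    # (1.5, 1.5)
print(shifted_center)  # (11.2, 11.2)
```

With the outlier included, the center no longer lies anywhere near the four points it is supposed to represent. A density-based method would instead leave the outlier unassigned.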

In real life, the data may contain irregularities, such as:

- Clusters can be of arbitrary shape, as the image below shows.

- Data may contain noise.

In the image, the dataset has clusters with non-convex shapes and includes outlier points. Finding clusters accurately in datasets with outliers and irregularly shaped groups is a challenge for the k-means method.

Real-world Example for DBSCAN

Let's assume that a megacity has a downtown district with many buildings. The district is famous for its wide variety of shops, from busy boutiques to attractive cafes. But as the district's appeal grew, so did the difficulty of managing its ever-changing landscape.

To face these challenges, the city hired Alex, a skilled urban planner, to revitalize the downtown plaza. Knowing that he needed a more data-driven approach, Alex turned to the DBSCAN clustering algorithm to analyze the district's spatial data.

Alex started by collecting data on various aspects of Downtown Plaza, including foot traffic, business types, and customer demographics. With this information in hand, he applied DBSCAN to identify clusters of similar businesses and areas with high foot traffic.

Using DBSCAN, Alex discovered several distinct clusters within the downtown plaza. He found a cluster of trendy cafes and eateries, a cluster of boutique shops, and even a cluster of street performers and artists. Additionally, DBSCAN highlighted bustling intersections and pedestrian hotspots where foot traffic was highest.

With this information, Alex could develop a strategic plan to enhance Downtown Plaza. He proposed pedestrian-friendly zones around high-traffic areas, encouraging outdoor seating and street performances. He also suggested promoting collaboration among businesses within the same cluster, fostering a sense of community and synergy.

Parameters Required for the DBSCAN Algorithm

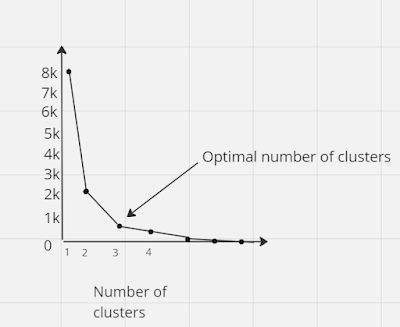

eps (ε): This parameter specifies the neighborhood around a data point. Two points separated by a distance of eps or less are considered neighbors. If eps is too small, a sizable percentage of the data will be regarded as outliers. If eps is too large, clusters merge and the majority of data points fall into a single cluster. One way to choose eps is the k-distance graph.

MinPts: This parameter is the minimum number of data points that must lie within the eps radius of a point for it to count as a core point. Larger datasets generally call for a larger MinPts. As a rule of thumb, choose MinPts greater than or equal to D+1, where D is the number of dimensions of the dataset, and use a value of at least 3.
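The two heuristics above can be sketched in a few lines of plain Python. This is a minimal illustration, not a definitive recipe: the toy `points` are hypothetical, the helper name `k_distances` is made up for this example, and using k = MinPts - 1 (so that the point itself counts toward MinPts) is one common convention among several.

```python
import math

def k_distances(points, k):
    """Distance from each point to its k-th nearest other point, sorted ascending."""
    result = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        result.append(dists[k - 1])
    return sorted(result)

# Hypothetical 2-D toy data: two tight groups and one isolated point.
points = [(0, 0), (0, 1), (1, 0), (1, 1),
          (10, 10), (10, 11), (11, 10), (11, 11),
          (30, 30)]

dims = len(points[0])
min_pts = max(3, dims + 1)                  # D + 1 rule of thumb, with a floor of 3
curve = k_distances(points, k=min_pts - 1)  # assumed convention: the point counts itself

for d in curve:
    print(round(d, 3))
```

Plotting `curve` gives the k-distance graph. Here the values stay at 1.0 for the eight grouped points and then jump sharply for the isolated point, so a value of eps just above the flat part of the curve would be a reasonable first guess.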

The algorithm distinguishes three types of data points:

Core point: A data point is a core point if its epsilon (ε) radius contains at least MinPts points.

Border point: A border point has fewer than MinPts points inside its epsilon (ε) radius, but lies within the ε-neighborhood of a core point.

Noise or outlier: A point that is neither a core point nor a border point.
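The three definitions translate fairly directly into code. The sketch below is a brute-force illustration on hypothetical toy data. The function name `classify_points` is made up for this example, and it assumes that a point counts as a member of its own ε-neighborhood.

```python
import math

def classify_points(points, eps, min_pts):
    """Label each point 'core', 'border', or 'noise'.

    Assumption: a point counts as a member of its own eps-neighborhood.
    """
    neighborhoods = [
        [j for j, q in enumerate(points) if math.dist(p, q) <= eps]
        for p in points
    ]
    is_core = [len(n) >= min_pts for n in neighborhoods]
    kinds = []
    for i, neighbors in enumerate(neighborhoods):
        if is_core[i]:
            kinds.append("core")
        elif any(is_core[j] for j in neighbors):
            kinds.append("border")   # not dense itself, but next to a core point
        else:
            kinds.append("noise")
    return kinds

# Hypothetical toy data: a small square, one point hanging off it, one far away.
points = [(0, 0), (0, 1), (1, 0), (1, 1), (2, 1), (8, 8)]
kinds = classify_points(points, eps=1.0, min_pts=3)
print(kinds)  # ['core', 'core', 'core', 'core', 'border', 'noise']
```

The point (2, 1) has only itself and (1, 1) within distance 1.0, so it is not a core point, but because (1, 1) is a core point it becomes a border point rather than noise.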

Steps used in the DBSCAN algorithm

- Initially, the algorithm finds all neighboring points within the ε radius of each data point and identifies the core points, which are those with at least MinPts neighbors.

- For each core point that is not already assigned to a cluster, create a new cluster.

- Next, the algorithm recursively finds all density-connected points and assigns them to the same cluster as the core point. Two points 'a' and 'b' are density-connected if there is a point 'c' with a sufficient number of neighbors such that both 'a' and 'b' can be reached from 'c' through steps of at most ε. This is a chaining process: if 'b' is a neighbor of 'c', 'c' is a neighbor of 'd', and 'd' is a neighbor of 'e', which in turn is a neighbor of 'a', then 'b' is connected to 'a'.

- After that, the algorithm iterates through the remaining unvisited points in the dataset. Any points that were not assigned to a cluster in the previous steps are labeled "noise".

Pseudocode for the DBSCAN clustering algorithm

Below is pseudocode for DBSCAN. It can serve as a starting point for developing DBSCAN clustering code in Python.

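    DBSCAN(dataset, eps, MinPts) {
        C = 0                                   # cluster index
        for each unvisited point p in the dataset {
            mark p as visited
            N = all points within eps of p      # find neighbors
            if |N| < MinPts:
                mark p as noise                 # may later become a border point
            else:
                C = C + 1                       # start a new cluster
                add p to cluster C
                for each point p' in N {        # expand the cluster
                    if p' is not visited:
                        mark p' as visited
                        N' = all points within eps of p'
                        if |N'| >= MinPts:
                            N = N U N'          # N union N'
                    if p' is not a member of any cluster:
                        add p' to cluster C
                }
        }
    }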
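As one possible translation of the pseudocode into Python, the following sketch uses only the standard library. It is a brute-force version written for readability rather than speed. The names `region_query` and `dbscan`, the use of -1 as the noise label, and the toy data are choices made for this example, and a point is assumed to count as a member of its own neighborhood.

```python
import math

NOISE = -1

def region_query(points, i, eps):
    """Indices of all points within eps of points[i] (including i itself)."""
    return [j for j, q in enumerate(points) if math.dist(points[i], q) <= eps]

def dbscan(points, eps, min_pts):
    """Return one cluster label per point; NOISE (-1) marks noise."""
    labels = [None] * len(points)          # None means "not visited yet"
    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        neighbors = region_query(points, i, eps)
        if len(neighbors) < min_pts:
            labels[i] = NOISE              # may be relabeled as a border point later
            continue
        cluster += 1                       # i is a core point: start a new cluster
        labels[i] = cluster
        queue = list(neighbors)
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster        # noise reached from a core point is a border point
            if labels[j] is not None:
                continue                   # already assigned to a cluster
            labels[j] = cluster
            j_neighbors = region_query(points, j, eps)
            if len(j_neighbors) >= min_pts:
                queue.extend(j_neighbors)  # j is a core point: keep expanding
    return labels

# Hypothetical toy data: two dense groups, one border point, one isolated point.
points = [(0, 0), (0, 1), (1, 0), (1, 1), (2, 1), (8, 8),
          (10, 10), (10, 11), (11, 10), (11, 11)]
labels = dbscan(points, eps=1.0, min_pts=3)
print(labels)  # [0, 0, 0, 0, 0, -1, 1, 1, 1, 1]
```

Each call to `region_query` scans the whole dataset, so this version takes quadratic time. Practical implementations typically replace that scan with a spatial index.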

Major features of Density-Based Clustering

- Density-based clustering is a single-scan method.

- It needs density parameters as a termination condition.

- It handles noise in the data.

- It can identify clusters of arbitrary shape and size.

Density-Based Clustering Methods

OPTICS, short for Ordering Points To Identify the Clustering Structure, describes the density-based clustering structure of a dataset. It produces an ordering of the database that represents the density-based clustering patterns under different parameter settings. OPTICS is useful for both automated and interactive cluster analysis, helping to detect the inherent clustering patterns within the data.

DENCLUE, developed by Hinneburg and Keim, is another density-based clustering method. It offers a concise mathematical description of irregularly shaped clusters in high-dimensional datasets, and it is particularly useful for datasets containing substantial noise.

Evaluation Metrics for the DBSCAN Algorithm in Machine Learning

We assess the effectiveness of DBSCAN clustering using the Silhouette score and the Adjusted Rand score. The Silhouette score ranges from -1 to 1. A score close to 1 means a data point is well clustered: it is close to points within its own cluster and far from points outside of it. A score close to 0 indicates overlapping clusters, and a score close to -1 indicates poorly grouped data. The Rand score measures how well the clustering agrees with known reference labels. The plain Rand score ranges from 0 to 1, while the adjusted version is corrected for chance and can fall slightly below 0. Scores close to 1 signify exceptional recovery, scores above 0.8 imply very good recovery, and scores below 0.5 indicate poor cluster recovery.
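Both metrics can be written out in plain Python to make the definitions concrete. The sketch below is illustrative only. The function names and toy data are hypothetical, the silhouette function assumes at least two clusters with no duplicate points, and it assumes that noise points have been removed before scoring, which is a choice and not a rule.

```python
import math
from collections import Counter

def mean_silhouette(points, labels):
    """Average silhouette coefficient over all points (needs at least 2 clusters)."""
    clusters = {}
    for p, lab in zip(points, labels):
        clusters.setdefault(lab, []).append(p)
    scores = []
    for p, lab in zip(points, labels):
        own = clusters[lab]
        if len(own) == 1:
            scores.append(0.0)             # usual convention for singleton clusters
            continue
        # a: mean distance to the other members of the point's own cluster
        a = sum(math.dist(p, q) for q in own) / (len(own) - 1)
        # b: mean distance to the nearest other cluster
        b = min(sum(math.dist(p, q) for q in other) / len(other)
                for other_lab, other in clusters.items() if other_lab != lab)
        scores.append((b - a) / max(a, b))
    return sum(scores) / len(scores)

def adjusted_rand_index(true_labels, pred_labels):
    """Chance-corrected agreement between two labelings of the same points."""
    n = len(true_labels)
    cells = Counter(zip(true_labels, pred_labels))
    sum_cells = sum(math.comb(c, 2) for c in cells.values())
    sum_rows = sum(math.comb(c, 2) for c in Counter(true_labels).values())
    sum_cols = sum(math.comb(c, 2) for c in Counter(pred_labels).values())
    expected = sum_rows * sum_cols / math.comb(n, 2)
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:
        return 1.0                         # both labelings are trivial
    return (sum_cells - expected) / (max_index - expected)

# Hypothetical toy data: two well-separated groups with known reference labels.
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
truth = [0, 0, 0, 1, 1, 1]
good = [0, 0, 0, 1, 1, 1]
poor = [0, 0, 1, 1, 1, 1]

sil = mean_silhouette(points, good)
ari_good = adjusted_rand_index(truth, good)
ari_poor = adjusted_rand_index(truth, poor)
print(round(sil, 3), ari_good, round(ari_poor, 3))
```

Note that the Silhouette score needs only the data and the predicted labels, whereas the Adjusted Rand score requires reference labels, so it is mainly useful on benchmark data where the true grouping is known.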

When Should We Use DBSCAN Over K-Means in Clustering Analysis?

When we are not certain about the number of clusters, we should use DBSCAN over k-means, because k-means needs the number of clusters to be specified in advance. DBSCAN also handles arbitrarily shaped clusters far better than k-means, so we should prefer it when we are unsure about the shape of the clusters. Furthermore, because DBSCAN handles outliers and noise far better, we should also use it when the data contains a lot of them.

Advantages of DBSCAN

DBSCAN has several advantages:

- Robust to outliers: DBSCAN can handle noise and outliers effectively. It doesn’t force data points into clusters if they don’t fit well.

- DBSCAN does not require cluster count to be pre-specified. Different from K-means, DBSCAN finds clusters based on data density rather than requiring a set number. This flexibility allows DBSCAN to adapt to the inherent structure of the data without imposing a fixed cluster count.

- DBSCAN excels at handling clusters with diverse shapes and sizes. Unlike certain algorithms limited to spherical clusters, DBSCAN can effectively detect clusters of arbitrary shape. This versatility allows it to capture complex patterns present in the data, irrespective of their geometric configuration.

- Flexibility in defining clusters: DBSCAN doesn’t assume clusters to be globular or to have a specific shape. It identifies clusters based on density-reachability, allowing for more flexible cluster definitions.

- Separates dense regions from sparse ones: it finds clusters wherever the local density exceeds the threshold set by ε and MinPts, although a single global ε limits how well it copes with clusters of very different densities.

- Efficient in processing large databases: it’s scalable and efficient in handling large datasets by focusing on the neighborhood of each point rather than the entire dataset.

- Few parameters: it has only two parameters, epsilon and minimum points, and reasonable starting values can often be chosen based on domain knowledge or heuristics such as the k-distance graph.

- Natural handling of noise: it explicitly labels points that do not belong to any cluster as noise, providing a clear distinction between actual clusters and noisy data.

- Good for discovering clusters of complex shapes: Especially useful in scenarios where clusters might not be easily separable or have convoluted shapes in higher-dimensional spaces.

Disadvantages of DBSCAN

- Parameter sensitivity: DBSCAN's efficacy depends on the careful choice of epsilon (ε) and the minimum number of points required to form a cluster (MinPts). Mistakes in parameter selection can produce poor segmentation, with clusters either merged together or too fragmented. Finding the right balance between these parameters is therefore crucial for achieving good clustering results.

- Difficulty with varying density and high-dimensional data: DBSCAN may struggle with datasets where clusters have varying densities or when dealing with high-dimensional data. It might not perform optimally in these scenarios without careful parameter tuning.

- Struggles with very large datasets: while it’s efficient in handling large datasets, extremely large datasets might pose computational challenges, especially in situations where the data does not have clear density separations.

- Requires careful preprocessing: prior normalization and scaling of data might be necessary for DBSCAN to perform effectively, as it calculates distances between points. Inaccuracies in these steps might affect clustering results.

- Difficulty handling data of uniform density: in cases where the data is uniformly dense, DBSCAN might not be able to distinguish meaningful clusters from noise effectively.

- Not ideal for clusters with varying densities and sizes: while it’s robust to arbitrary shapes, clusters with significantly different densities or sizes might be challenging for DBSCAN to cluster effectively.

- DBSCAN's performance can be influenced by the selection of the distance metric used, such as Euclidean distance. Different datasets may necessitate the use of specific distance measures tailored to their characteristics. This sensitivity to the choice of distance metric can introduce variations in the clustering outcomes produced by DBSCAN, emphasizing the importance of selecting an appropriate distance measure to suit the dataset's characteristics.
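The preprocessing point can be made concrete with a small experiment. Because DBSCAN compares raw distances with ε, a feature measured in large units can dominate the neighborhood test. The sketch below uses hypothetical customer data and made-up helper names (`standardize`, `nearest_neighbor`), and assumes no column is constant so the standard deviation is never zero.

```python
import math

def standardize(rows):
    """Rescale every column to zero mean and unit (population) standard deviation."""
    columns = list(zip(*rows))
    means = [sum(c) / len(c) for c in columns]
    stds = [math.sqrt(sum((v - m) ** 2 for v in c) / len(c))
            for c, m in zip(columns, means)]
    return [tuple((v - m) / s for v, m, s in zip(row, means, stds)) for row in rows]

def nearest_neighbor(rows, i):
    """Index of the row closest to rows[i] under Euclidean distance."""
    others = [j for j in range(len(rows)) if j != i]
    return min(others, key=lambda j: math.dist(rows[i], rows[j]))

# Hypothetical customers: (age in years, income in dollars).
customers = [(25, 50_000), (26, 52_000), (60, 50_500), (61, 90_000)]

raw_nearest = nearest_neighbor(customers, 0)
scaled_nearest = nearest_neighbor(standardize(customers), 0)

print(raw_nearest)     # 2: income dominates, so the 60-year-old looks closest
print(scaled_nearest)  # 1: after scaling, the 26-year-old is closest
```

On the raw data the 25-year-old's nearest neighbor is a customer 35 years older, simply because their incomes differ by only 500. After standardization both features contribute on a comparable scale, and the neighbor changes. The same reasoning applies to the choice of distance metric: whatever decides which points are "near" decides the clusters.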

Applications

Applications of DBSCAN include:

Cluster Analysis: DBSCAN is widely used in cluster analysis, a subfield of data mining and machine learning. It can identify clusters of arbitrary size and shape, for example in geographical datasets.

Anomaly Detection: DBSCAN can be used to find anomalies in a dataset, that is, points that are not part of any cluster or that lie in low-density areas. It identifies outliers without assuming anything about the distribution of the data.

Spatial Data Analysis: DBSCAN is widely used in geographic information systems (GIS) for spatial data analysis, which involves locating regional clusters of events or phenomena, such as epidemics or crime hotspots.

Image Segmentation: DBSCAN can be used in image processing to separate images into regions of similar hue or intensity. This makes it easier to identify objects or regions of interest in images.

Customer Segmentation: Using transactional data, DBSCAN can assist businesses with customer segmentation tasks, such as identifying groups of customers with comparable purchasing patterns or preferences.

Recommendation Systems: DBSCAN can be used in recommendation systems to group users or items based on similar attributes. Dividing customers into subgroups with common preferences can improve recommendation accuracy.

Network Analysis: Whether analyzing social networks or any other type of network, DBSCAN can be used to find clusters of nodes with a high density of connections.

These examples show DBSCAN's flexibility across many areas, which comes from its ability to recognize clusters of any form and to handle noise without needing the number of clusters in advance, unlike more traditional clustering algorithms such as k-means.

Summary

DBSCAN is an unsupervised clustering technique that recognizes clusters based on density. It does not need the user to specify the number of clusters in advance, and it is resilient to outliers. DBSCAN clusters data by identifying densely populated areas, forming clusters where points are closely packed, and separating them from regions of sparse density. It is also simple enough to implement directly in Python when building a machine learning model. However, its efficacy depends on parameter selection, especially when dealing with clusters of varying densities or datasets with high dimensions. It may also require careful preprocessing to yield good results. Despite these challenges, DBSCAN excels at handling clusters with irregular shapes and at separating noise from the clusters in a dataset.